Large Language Models (LLMs) like ChatGPT demonstrate impressive capabilities in natural language understanding and generation. But they have a fundamental limitation: they operate as closed systems trained on static datasets.

They lack real-time awareness of new information, struggle with factual accuracy, and cannot access private or domain-specific data. For companies building AI-driven products across legal, IT, healthcare, finance, and customer support — this is a significant constraint.

Key Takeaways

- Retrieval-Augmented Generation (RAG) bridges the gap between static LLM knowledge and real-time, domain-specific data

- Vector databases excel at fast semantic search across unstructured text

- Knowledge graphs excel at structured reasoning, relationship traversal, and precision

- The hybrid approach — combining both — delivers the most accurate and contextual results

- Graphshare implements this hybrid model natively through Neo4j

What Is RAG and Why Does It Matter?

Retrieval-Augmented Generation (RAG) addresses the LLM knowledge gap by combining model responses with a retrieval system that fetches relevant resources at inference time.

Instead of relying solely on the model's static training data, RAG enables dynamic access to fresh, grounded information — improving both response relevance and accuracy.

The core question becomes: how should relevant, updated knowledge be retrieved for LLM consumption? Two prominent approaches have emerged.

Vector Databases in a Nutshell

A vector database functions like an intelligent librarian, organising information through "vectors" — numerical codes that capture data meaning, created by models like BERT or SentenceTransformers.

Similar concepts produce vectors positioned close together. Unrelated concepts position farther apart. Distance and angle between vectors gauge semantic similarity.

Crucially, everything converts to vectors — not remaining as text — enabling mathematical similarity operations across the entire database.

Knowledge Graphs in a Nutshell

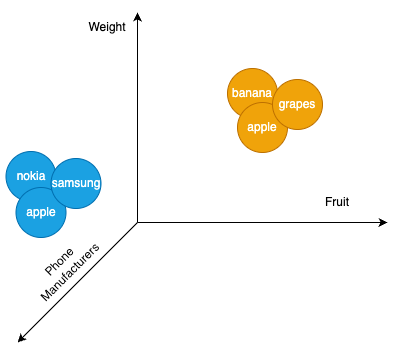

A knowledge graph organises information as nodes (entities like people, companies, or objects) connected by edges (relationships such as "produces," "is a type of," "relates to").

This creates a digital map of interconnected ideas and facts. For example, "Company: Apple" connects to "Phone: Model Pro" via the "PRODUCES" edge — providing explicit, navigable connections between data points.

VectorRAG vs. GraphRAG: When to Use Each

When VectorRAG Excels

For a news aggregator finding articles matching "latest tech breakthroughs," VectorRAG is the clear winner. It is fast, scalable, and excellent at understanding text meaning.

Strengths:

- Text-based content requires minimal preprocessing

- Pre-trained models transform articles into vectors within seconds

- Modern platforms (Pinecone, Weaviate, Chroma) enable quick deployment

- No specialised technical expertise required

Limitation: Vectorisation risks losing deep relational understanding. It cannot easily establish cause-and-effect connections or follow fact chains across articles.

When GraphRAG Shines

GraphRAG shines in structured, relational domains requiring precision and reasoning.

Strengths:

- Superior reasoning and cause-effect tracing

- Follows complex information chains across entities

- Provides explainable, auditable results

Limitation: Building knowledge graphs requires substantial planning, domain expertise, and ongoing maintenance.

The Best of Both Worlds: Hybrid RAG

The optimal approach combines both methods:

- Vector search quickly identifies semantically relevant content to initiate the LLM conversation

- Knowledge graph traversal then deepens the response with structured relationships and connected context

- The LLM produces a comprehensive, grounded response informed by both semantic and relational intelligence

This hybrid model ensures LLMs retrieve relevant information while understanding broader context — producing more accurate and meaningful responses.

How Graphshare Implements Hybrid RAG

Graphshare, powered by Neo4j, implements this hybrid approach natively. Neo4j supports both native knowledge graph functionality and built-in vector search capabilities.

This means semantic matching and complex relationship mapping happen within a single ecosystem. LLMs benefit from both semantic understanding and relational intelligence — delivering not just answers, but complete narratives.

The future of enterprise AI is not choosing between vector databases and knowledge graphs. It is combining them intelligently to ground LLM outputs in both meaning and structure.